Hi, I'm facing really weird behavior between VertexArrays and the vertex shader:

Given the same 3 vertices, a VertexArray doesn't produce the same "gl_Vertex" value in the vertex shader, depending on the VertexArray's size.

For VertexArrays of size 5 and up, gl_Vertex seems to contain world coordinates (as expected), but for VertexArrays of size 4 or less, gl_Vertex seems to contain coordinates centered on and aligned with the window.
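To pin the observation down, here is a small model of what the shader *appears* to receive. It is based only on the behavior described in this post, not on SFML's source. `observed_gl_vertex` and the stand-in `transform` closure are hypothetical names, and the threshold of 4 vertices is the empirical boundary reported above.

```rust
/// A 2D point in the vertex array's local coordinates.
type Point = (f32, f32);

/// Hypothetical model of the observed gl_Vertex.xy (not SFML's actual code).
/// `transform` stands in for the render-state transform.
fn observed_gl_vertex<F: Fn(Point) -> Point>(local: Point, vertex_count: usize, transform: F) -> Point {
    if vertex_count <= 4 {
        // Small arrays: the shader seems to get already-transformed positions.
        transform(local)
    } else {
        // Larger arrays: the shader gets the untouched local positions.
        local
    }
}

fn main() {
    // Simplified stand-in transform for illustration: uniform scale by 10.
    let scale10 = |(x, y): Point| (x * 10.0, y * 10.0);
    let p = (0.5, -0.5);
    println!("size 3: {:?}", observed_gl_vertex(p, 3, scale10));
    println!("size 5: {:?}", observed_gl_vertex(p, 5, scale10));
}
```

In words: with the same vertex data, only the array length decides whether the render-state transform already appears to be baked into gl_Vertex.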

I've been able to reproduce it with the minimal example described below, BUT in rust-sfml, so maybe the issue lies there... Anyway, if anyone could try to reproduce it in native C++, it would help narrow down the issue.

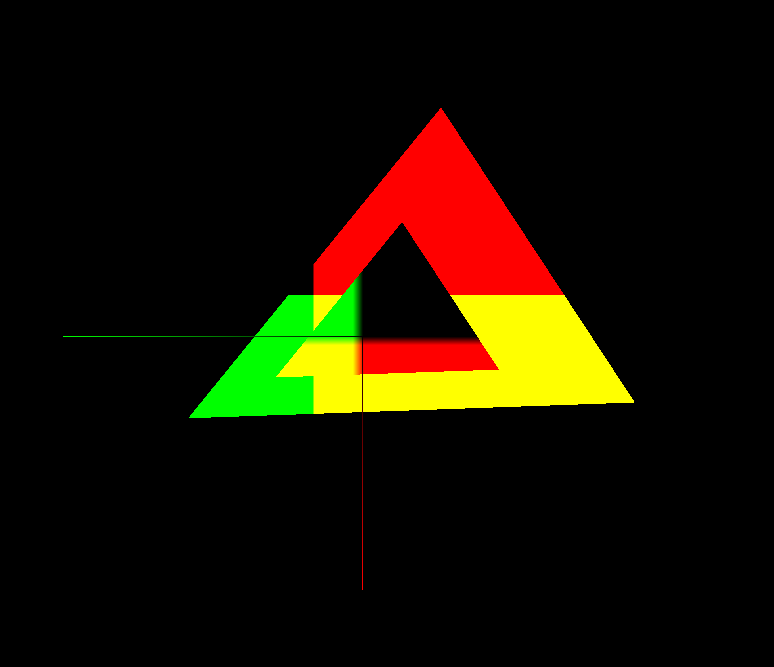

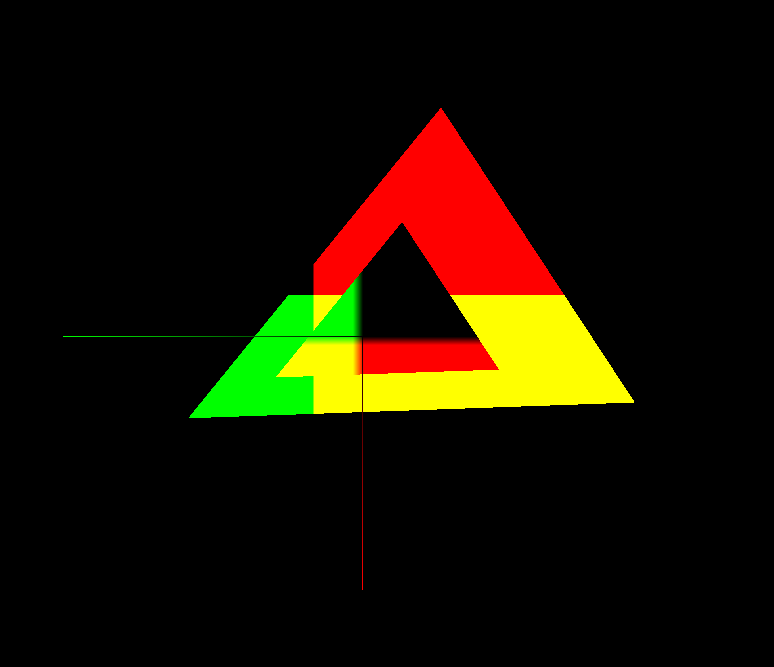

In this image there are three VertexArrays:

- one line strip representing x and y axes (red and green)

- one large triangle, a vertex array declared with size 3

- one small triangle, a vertex array declared with size 5
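A size-5 array still shows a single triangle because a triangle-list primitive (`PrimitiveType::Triangles`, i.e. OpenGL's `GL_TRIANGLES`) turns every 3 consecutive vertices into one triangle and ignores leftovers. A tiny sketch of that rule; `triangles_drawn` is a hypothetical helper, not part of SFML:

```rust
/// Number of complete triangles a triangle-list primitive draws:
/// every 3 consecutive vertices form one triangle, leftovers are ignored.
fn triangles_drawn(vertex_count: usize) -> usize {
    vertex_count / 3
}

fn main() {
    for n in [3, 4, 5, 6] {
        println!("{n} vertices -> {} triangle(s)", triangles_drawn(n));
    }
}
```

So in the repro, the two extra vertices of the size-5 array only change the array's length, not what is drawn.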

Both triangles are made of the same coordinates centered on the origin, just scaled differently. They are drawn with the same shader.
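The shared geometry can be sketched without SFML: the same three corners, rotated by the same angle, with only the half-size differing (20 for the large triangle, 10 for the small one). `rotate` mirrors the closure in the source below; `triangle` is a hypothetical helper and plain `(f32, f32)` tuples stand in for `Vector2f`.

```rust
/// Rotate `v` by the angle whose (sin, cos) pair is given,
/// using the same formula as the `rotate` closure in the source.
fn rotate(v: (f32, f32), (sin, cos): (f32, f32)) -> (f32, f32) {
    (v.0 * cos - v.1 * sin, v.1 * cos + v.0 * sin)
}

/// The three corners shared by both triangles, for a given half-size and angle.
fn triangle(size: f32, angle: f32) -> [(f32, f32); 3] {
    let sc = angle.sin_cos();
    [
        rotate((-size, -size), sc),
        rotate((size, -size), sc),
        rotate((0.0, size), sc),
    ]
}

fn main() {
    let angle = 0.3_f32;
    let large = triangle(20.0, angle);
    let small = triangle(10.0, angle);
    for (l, s) in large.iter().zip(small.iter()) {
        // Each large corner is twice the matching small corner.
        println!("{:?} vs 2 x {:?}", l, s);
    }
}
```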

The render state is translated (diagonally), rotated (90 degrees) and scaled (x10) to reveal the issue.
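For reference, here is where that transform should send the large triangle's corners. This is a sketch assuming the calls compose like SFML's `Transform` (each call post-multiplies the current matrix, and `rotate` takes degrees), so a vertex is scaled first, then rotated, then translated. `Affine` is a hypothetical stand-in type, not rust-sfml's API.

```rust
/// Row-major 3x3 affine matrix (hypothetical stand-in for a 2D transform).
#[derive(Clone, Copy, Debug)]
struct Affine([[f32; 3]; 3]);

impl Affine {
    fn identity() -> Self {
        Affine([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
    }

    /// Matrix product self * o.
    fn mul(self, o: Affine) -> Affine {
        let mut r = [[0.0_f32; 3]; 3];
        for i in 0..3 {
            for j in 0..3 {
                for k in 0..3 {
                    r[i][j] += self.0[i][k] * o.0[k][j];
                }
            }
        }
        Affine(r)
    }

    fn translate(self, x: f32, y: f32) -> Affine {
        self.mul(Affine([[1.0, 0.0, x], [0.0, 1.0, y], [0.0, 0.0, 1.0]]))
    }

    /// Angle in degrees, as in `transform.rotate(90.)`.
    fn rotate(self, degrees: f32) -> Affine {
        let (s, c) = degrees.to_radians().sin_cos();
        self.mul(Affine([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]))
    }

    fn scale(self, sx: f32, sy: f32) -> Affine {
        self.mul(Affine([[sx, 0.0, 0.0], [0.0, sy, 0.0], [0.0, 0.0, 1.0]]))
    }

    fn apply(self, (x, y): (f32, f32)) -> (f32, f32) {
        let m = self.0;
        (m[0][0] * x + m[0][1] * y + m[0][2], m[1][0] * x + m[1][1] * y + m[1][2])
    }
}

fn main() {
    // Same call order as the render state: translate, rotate 90 degrees, scale x10.
    let t = Affine::identity().translate(50.0, 50.0).rotate(90.0).scale(10.0, 10.0);
    for corner in [(-20.0, -20.0), (20.0, -20.0), (0.0, 20.0)] {
        println!("{:?} -> {:?}", corner, t.apply(corner));
    }
}
```

Up to float rounding, (-20, -20) lands near (250, -150) and (0, 20) near (-150, 50), which is the kind of value the large triangle's colors would reflect if gl_Vertex already held transformed positions.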

The vertex shader passes the gl_Vertex coordinates to the fragment shader, which maps x and y to the red and green channels of gl_FragColor.
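A CPU-side sketch of that mapping, assuming an 8-bit normalized color buffer where each channel is clamped to [0, 1] and rounded to the nearest of 0..=255 (`frag_color` is a hypothetical helper). Because channels saturate outside [0, 1], only positions within about one unit of an axis produce a visible gradient; if gl_Vertex is in local space, the x10 scale should widen that band to roughly 10 units on screen.

```rust
/// Approximate stored RGBA for a given io_world_position,
/// assuming clamping to [0, 1] and round-to-nearest into 8 bits.
fn frag_color(pos: (f32, f32)) -> [u8; 4] {
    let to_byte = |v: f32| (v.clamp(0.0, 1.0) * 255.0).round() as u8;
    // x -> red, y -> green, blue = 0, alpha = 1 (opaque)
    [to_byte(pos.0), to_byte(pos.1), 0, 255]
}

fn main() {
    for x in [-1.0, 0.0, 0.5, 1.0, 5.0] {
        println!("x = {x:>4}: {:?}", frag_color((x, 0.0)));
    }
}
```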

As you can see, the small triangle displays coordinates rotated by 90 degrees, still centered on itself, and the transition between colors is blurred over 10 pixels, as expected. The large triangle, on the other hand, displays colors as if no changes had been made to the render state.

Thanks for reading and for your help!

Here is the full source code if you want to check anything else:

Rust:

```rust
use sfml::graphics::{
    Color, PrimitiveType, RenderStates, RenderTarget, RenderWindow, Shader, Vertex, VertexArray,
    View,
};
use sfml::system::{Clock, Time, Vector2f};
use sfml::window::{ContextSettings, Event, Key, Style};

// `Game` is the project's own type providing WINDOW_STARTING_RESOLUTION
// and SIMULATION_TIME_STEP.
fn minimal_bug_reproduction() {
    let mut window = RenderWindow::new(
        Game::WINDOW_STARTING_RESOLUTION,
        "Vertex Array issue with shader and gl_Vertex.xy",
        Style::DEFAULT,
        &ContextSettings::default(),
    );
    // 1500 x 1000 world units centered on the origin.
    window.set_view(&View::new((0., 0.).into(), Vector2f::new(1500., 1000.)));

    // Rotates `vector` by the angle whose (sin, cos) pair is given.
    let rotate = |vector: Vector2f, sin_cos: (f32, f32)| {
        let (sin, cos) = sin_cos;
        Vector2f::new(
            vector.x * cos - vector.y * sin,
            vector.y * cos + vector.x * sin,
        )
    };

    let shader = Shader::from_file(
        Some("resources/shaders/dummy_vertex.glsl"),
        None,
        Some("resources/shaders/triangle_fragment.glsl"),
    );

    // Translate (diagonal), rotate (90 deg) and scale (x10) to reveal the issue.
    let mut render_state = RenderStates::default();
    render_state.transform.translate(50., 50.);
    render_state.transform.rotate(90.);
    render_state.transform.scale(10., 10.);
    render_state.shader = shader.as_ref();

    let mut angle = 0_f32;
    let angle_speed = 0.5_f32;
    let left_pos = Vector2f::new(0., 0.);
    let right_pos = Vector2f::new(0., 0.);

    // x (red) and y (green) axes, drawn without the shader.
    let mut up_shape = VertexArray::new(PrimitiveType::LineStrip, 3);
    up_shape[0] = Vertex::with_pos_color(Vector2f::new(30., 0.), Color::RED);
    up_shape[1] = Vertex::with_pos_color(Vector2f::new(0., 0.), Color::BLACK);
    up_shape[2] = Vertex::with_pos_color(Vector2f::new(0., 30.), Color::GREEN);

    // Large triangle: vertex array declared with size 3.
    let size_1 = 20.;
    let mut shape_1 = VertexArray::new(PrimitiveType::Triangles, 3);
    shape_1[0] = Vertex::with_pos(Vector2f::new(-size_1, -size_1) + left_pos);
    shape_1[1] = Vertex::with_pos(Vector2f::new(size_1, -size_1) + left_pos);
    shape_1[2] = Vertex::with_pos(Vector2f::new(0., size_1) + left_pos);

    // Small triangle: vertex array declared with size 5. Only the first 3
    // vertices are set; the last 2 keep their default values.
    let size_2 = 10.;
    let mut shape_2 = VertexArray::new(PrimitiveType::Triangles, 5);
    shape_2[0] = Vertex::with_pos(Vector2f::new(-size_2, -size_2) + right_pos);
    shape_2[1] = Vertex::with_pos(Vector2f::new(size_2, -size_2) + right_pos);
    shape_2[2] = Vertex::with_pos(Vector2f::new(0., size_2) + right_pos);

    let mut clock_updates: Clock = Clock::start();
    let mut delta_time_physics: Time = Time::ZERO;
    let mut delta_time_update: Time = Time::ZERO;

    while window.is_open() {
        let delta_time = clock_updates.restart();
        delta_time_physics += delta_time;
        delta_time_update += delta_time;

        // Fixed time step: spin both triangles around the origin.
        while delta_time_physics.as_seconds() >= Game::SIMULATION_TIME_STEP as f32 {
            delta_time_physics -= Time::seconds(Game::SIMULATION_TIME_STEP as f32);
            angle += angle_speed * Game::SIMULATION_TIME_STEP as f32;

            shape_1[0] = Vertex::with_pos(rotate(Vector2f::new(-size_1, -size_1), angle.sin_cos()) + left_pos);
            shape_1[1] = Vertex::with_pos(rotate(Vector2f::new(size_1, -size_1), angle.sin_cos()) + left_pos);
            shape_1[2] = Vertex::with_pos(rotate(Vector2f::new(0., size_1), angle.sin_cos()) + left_pos);

            shape_2[0] = Vertex::with_pos(rotate(Vector2f::new(-size_2, -size_2), angle.sin_cos()) + right_pos);
            shape_2[1] = Vertex::with_pos(rotate(Vector2f::new(size_2, -size_2), angle.sin_cos()) + right_pos);
            shape_2[2] = Vertex::with_pos(rotate(Vector2f::new(0., size_2), angle.sin_cos()) + right_pos);
        }

        while let Some(event) = window.poll_event() {
            match event {
                Event::Closed => window.close(),
                Event::KeyPressed { code: Key::Escape, .. } => window.close(),
                _ => {}
            }
        }

        window.clear(Color::BLACK);
        // Both triangles use the shader and the transformed render state.
        window.draw_vertex_array(&shape_1, render_state);
        window.draw_vertex_array(&shape_2, render_state);
        // Draw the axes without the shader, then restore it for the next frame.
        render_state.shader = None;
        window.draw_vertex_array(&up_shape, render_state);
        render_state.shader = shader.as_ref();
        window.display();
    }
}
```

Vertex shader (`resources/shaders/dummy_vertex.glsl`):

```glsl
#version 120

varying vec2 io_world_position;

void main() {
    // transform the vertex position
    gl_Position = gl_ModelViewProjectionMatrix * gl_Vertex;

    // pass the raw vertex position on to the fragment shader
    io_world_position = gl_Vertex.xy;

    // transform the texture coordinates
    gl_TexCoord[0] = gl_TextureMatrix[0] * gl_MultiTexCoord0;

    // write the raw vertex position as the front color (not read by the fragment shader)
    gl_FrontColor = vec4(gl_Vertex.xy, 0., 1.);
}
```

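Fragment shader (`resources/shaders/triangle_fragment.glsl`):

```glsl
#version 120

varying vec2 io_world_position;

void main() {
    // map x to red and y to green, fully opaque
    gl_FragColor = vec4(io_world_position, 0., 1.);
}
```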